Amazon Web Services wants you to create data silos to ensure you get the best performance when processing data. AWS also wants to help unify your data to ensure that insights don’t fall between the cracks. If you think these two belief systems are mutually exclusive, then perhaps you should learn more about AWS’s data lakehouse strategy.

It’s hard to pinpoint exactly when AWS began adopting the data lakehouse design paradigm, in which characteristics of a data warehouse are implemented atop a data lake (hence the merger of a “lake” with a “house”). Google Cloud and Databricks have been early practitioners of data lakehouses, which are designed to provide a force for centralization and to reduce the data integration challenges that crop up when one allows data silos to proliferate widely. AWS started talking about its lakehouse architecture about a year ago. One of the key elements of that lakehouse strategy is Amazon Athena, which is AWS’s version of the Presto SQL query engine. Athena Federated Query enables users to execute queries that touch on a wide range of data sources, including data sitting in S3 as well as relational and non-relational databases in AWS.

A traditional data warehouse is not suitable for unstructured and semi-structured data or for machine learning. Compute and storage are also coupled in a data warehouse, which is why it is expensive. These constraints led the way to the data lake, which is schema on read. A data lake decouples compute and storage, which brings a significant drop in cost. Data of any structure and volume can be stored in a data lake and queried from Athena, Redshift, or EMR through the Glue Data Catalog. With a data lake, however, data is not meant to be updated in S3. Delta Lake addresses these data lake challenges by using Apache Spark to update records in S3.
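To make the point about Delta Lake updating records in S3 a little more concrete, here is a minimal sketch of an upsert (merge) with the Delta Lake Python API. It is an illustration under assumptions, not the code from the POC: the helper names `build_merge_condition` and `upsert_to_delta`, the key column `id`, and the S3 path in the comments are all hypothetical, and it assumes a Spark session that already has the Delta Lake package and SQL extension configured.

```python
from typing import Iterable


def build_merge_condition(keys: Iterable[str], target: str = "t", source: str = "s") -> str:
    """Build the predicate that matches incoming rows to existing rows."""
    keys = list(keys)
    if not keys:
        raise ValueError("at least one key column is required")
    return " AND ".join(f"{target}.{k} = {source}.{k}" for k in keys)


def upsert_to_delta(spark, updates_df, table_path: str, keys: Iterable[str]) -> None:
    """Merge updates_df into the Delta table at table_path.

    Rows that match on the key columns are updated; the rest are inserted.
    """
    # Imported lazily so the helper above works without Delta Lake installed.
    from delta.tables import DeltaTable

    target = DeltaTable.forPath(spark, table_path)
    (
        target.alias("t")
        .merge(updates_df.alias("s"), build_merge_condition(keys))
        .whenMatchedUpdateAll()
        .whenNotMatchedInsertAll()
        .execute()
    )


# Hypothetical usage (bucket, path and key column are placeholders):
#   upsert_to_delta(spark, updates_df, "s3://example-bucket/delta/customers", ["id"])
```

The merge rewrites only the affected data files and records the change in the Delta transaction log, which is what makes record-level updates possible on top of otherwise immutable S3 objects.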

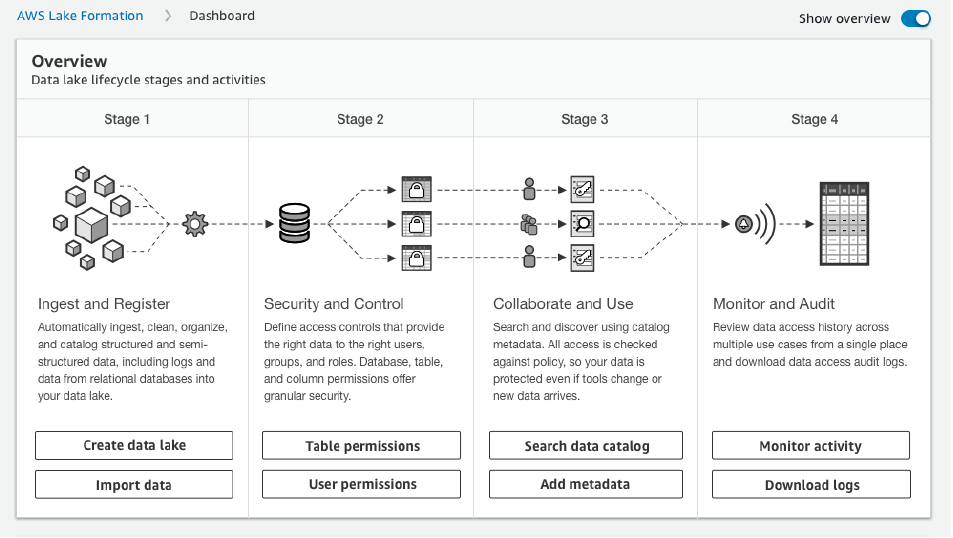

The diagram above gives an overview of the architectures. As the diagram suggests, organizations are working on building a lakehouse (data lake + data warehouse). Over time, the data model, architecture, compute, and storage have changed, but there is no impact on the result. As every company is spending millions to get insights from data, it is now imperative to improve data quality, which adds value to the business.

In AWS, a lakehouse can be built either in Redshift or on S3, using either native Spark or the Glue service. Snowflake and BigQuery are out of the scope of this discussion. In a very short note, why Delta Lake? A traditional data warehouse is arguably one of the best options for data analysis, but it is schema on write, which is the constraint that the data lake and then Delta Lake set out to address.

A few months back, I was working on a POC on Delta Lake using AWS EMR. The aim was to compare its performance with our existing AWS Redshift warehouse solution. This article does not cover optimization, though; it focuses on creating a lakehouse in AWS using Spark and Delta Lake. I created the pipeline using AWS Lambda, which creates the EMR cluster, runs the Delta Lake code, and terminates the cluster soon after the code has executed. I will cover this pipeline in another post.

Note: While running the code using spark-submit, I experienced extraneous messages on the console with EMR version 5.21. EMR has addressed this issue from version 5.33 onwards.
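The Lambda-driven pipeline mentioned above (create the EMR cluster, run the Delta Lake code, terminate the cluster) could look roughly like the sketch below. This is not the actual pipeline from the POC, only one plausible shape for it using boto3's `run_job_flow`. The cluster name, instance types and counts, the default IAM role names, and the `script_s3_path` and `log_uri` event fields are assumptions for illustration.

```python
def build_job_flow_request(script_s3_path: str, log_uri: str,
                           release_label: str = "emr-5.33.0") -> dict:
    """Build the run_job_flow request for a transient Spark cluster."""
    return {
        "Name": "delta-lake-poc",  # hypothetical cluster name
        "ReleaseLabel": release_label,
        "LogUri": log_uri,
        "Applications": [{"Name": "Spark"}],
        "Instances": {
            "InstanceGroups": [
                {"Name": "Master", "InstanceRole": "MASTER",
                 "InstanceType": "m5.xlarge", "InstanceCount": 1},
                {"Name": "Core", "InstanceRole": "CORE",
                 "InstanceType": "m5.xlarge", "InstanceCount": 2},
            ],
            # With no steps left to run, the cluster shuts itself down.
            "KeepJobFlowAliveWhenNoSteps": False,
            "TerminationProtected": False,
        },
        "Steps": [
            {
                "Name": "run-delta-job",
                "ActionOnFailure": "TERMINATE_CLUSTER",
                "HadoopJarStep": {
                    "Jar": "command-runner.jar",
                    "Args": ["spark-submit", "--deploy-mode", "cluster",
                             script_s3_path],
                },
            }
        ],
        # Default EMR role names; assumed to exist in the account.
        "JobFlowRole": "EMR_EC2_DefaultRole",
        "ServiceRole": "EMR_DefaultRole",
    }


def lambda_handler(event, context):
    """Lambda entry point: launch the cluster and return its id."""
    import boto3  # provided by the Lambda Python runtime

    emr = boto3.client("emr")
    request = build_job_flow_request(event["script_s3_path"], event["log_uri"])
    response = emr.run_job_flow(**request)
    return {"cluster_id": response["JobFlowId"]}
```

Setting `KeepJobFlowAliveWhenNoSteps` to `False` is what makes the cluster transient: once the single spark-submit step finishes, EMR terminates the cluster without a separate teardown call. The Delta Lake package itself would still need to be made available to the job, for example through spark-submit options that match the cluster's Spark version.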
